Smart Manufacturing: data that works, not just data that arrives

by Andrea Spinelli, BU Manager Industry 5.0.

The real leap in quality—what separates production that generates numbers from production that generates decisions—lies in the ability to transform raw data into contextualized information. And this transformation requires precise architectural choices, often underestimated.

In today’s industrial landscape, the term ‘Smart Manufacturing’ is often overused, as if it were a sticker to be applied whenever there’s a workshop with a PC interfaced to a machine. For those who experience the factory floor every day, reality is much more physical and raw. The real revolution doesn’t lie in purchasing the latest collaborative robot or in the massive installation of sensors, but in the ability to make machines communicate intelligently, trying to “understand” what they are saying.

Many companies today find themselves in a paradoxical situation: they have machines producing thousands of data points per second, but they can’t even understand what they are producing and at what performance levels. Extracting a signal from a PLC or a vibration sensor is relatively simple. The real technical bottleneck, however, is contextualization.

Receiving an alert indicating a bearing temperature increase to 80°C is just data. Knowing that this peak occurred while the machine was working a high-strength alloy, with a tool at the end of its life and during a shift that started only two hours ago, is information.

When data arrives together with its context, maintenance can be planned before a failure occurs, avoiding unexpected production stoppages.

Without context, data is just background noise that generates false alarms and leaves maintenance personnel or operators unable to resolve issues.
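The bearing-temperature example above can be sketched in a few lines of Python. This is only a minimal illustration under assumptions of my own: the `Reading` and `MachineContext` types, their fields, and the machine name `lathe-01` are hypothetical, and in a real plant the context would come from the MES, the tool management system, and the shift calendar.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Reading:
    """A raw sensor value: this is the 'data'."""
    machine_id: str
    signal: str
    value: float
    timestamp: datetime


@dataclass
class MachineContext:
    """What the machine was doing during a time window (hypothetical fields)."""
    machine_id: str
    start: datetime
    end: datetime
    material: str
    tool_life_used: float  # fraction of rated tool life consumed, 0.0 to 1.0
    shift_start: datetime


def contextualize(reading: Reading, contexts: list[MachineContext]) -> Optional[dict]:
    """Attach the context that was active when the reading was taken."""
    for ctx in contexts:
        same_machine = ctx.machine_id == reading.machine_id
        if same_machine and ctx.start <= reading.timestamp < ctx.end:
            return {
                "machine_id": reading.machine_id,
                "signal": reading.signal,
                "value": reading.value,
                "material": ctx.material,
                "tool_life_used": ctx.tool_life_used,
                "hours_into_shift": (reading.timestamp - ctx.shift_start) / timedelta(hours=1),
            }
    return None  # no matching context: the reading remains raw data


shift_start = datetime(2024, 5, 6, 6, 0)
contexts = [
    MachineContext("lathe-01", shift_start, shift_start + timedelta(hours=4),
                   "high-strength alloy", 0.95, shift_start),
]
reading = Reading("lathe-01", "bearing_temp_c", 80.0, shift_start + timedelta(hours=2))
info = contextualize(reading, contexts)
print(info)
```

The same 80°C value now carries the material, the tool wear, and the time elapsed in the shift, which is what an operator or a model needs in order to judge whether the alert matters.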

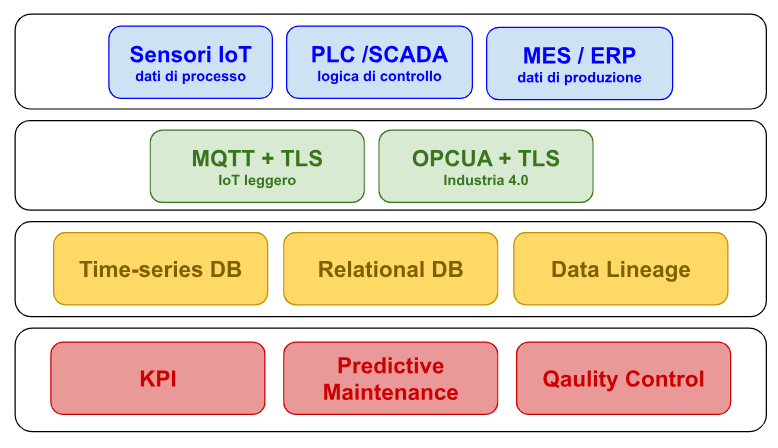

1. The data flow: from machine to decision

We must therefore guide data along a journey toward more intelligent use: multivariate statistical analysis, deep learning, and AI. However, this journey has starting conditions that are essential for the speed of the knowledge-building process.

Understanding this flow is fundamental for anyone who wants to design an industrial architecture that is not only connected, but governable. Each level introduces technical decisions that have direct impacts on security, management, and often regulatory aspects as well.

2. The starting foundations

Digitalizing production process control to make it truly data-driven requires companies to make a significant investment of time, resources, and strategic vision. Unfortunately, investment alone is not enough: for the project to deliver measurable economic results (such as reduced machine downtime, lower scrap rates, and less waste of raw materials and energy), it is necessary to consciously address a series of choices that, at first glance, may seem purely technical, but have profound repercussions on the organization's security, governance, and competitiveness.

Choosing how to transmit data, where to store it, who can access it, and how to track its lifecycle are not decisions to be delegated solely to the IT department. These are decisions that determine the quality of what the system is able to deliver: not simple raw inputs, but contextualized information that transforms into knowledge, and knowledge into operational and strategic decisions. It is this chain, from data to decision, that creates the vast gap between a simply connected factory and a smart factory.

Below, I examine the first important link in our data chain, what I would call the raw state of data. Let's look at four fundamental aspects of data: how it reaches us, how we store it, how we provide it to others, and how we track its passage.

2.1 Data transmission

Connecting machines means opening attack surfaces. For this reason, choosing the transport protocol is not a purely technical matter, but also one of risk governance.

MQTT with TLS is today the de facto standard for most industrial IoT devices: lightweight, widely supported, and encrypted in transit between each client and the broker.
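On the device side, the TLS part of that choice comes down to a handful of settings. The sketch below uses only Python's standard `ssl` module to build a client context that verifies the broker's certificate and refuses legacy protocol versions. The function name `build_tls_context` and its parameters are my own, and the file paths for the CA and client certificates are assumptions that depend on how the plant's PKI is organized.

```python
import ssl
from typing import Optional


def build_tls_context(ca_file: Optional[str] = None,
                      client_cert: Optional[str] = None,
                      client_key: Optional[str] = None) -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the broker's certificate."""
    # create_default_context turns on certificate and hostname verification.
    # With ca_file=None the system trust store is used.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if client_cert:
        # Optional mutual TLS: the device also proves its own identity.
        ctx.load_cert_chain(certfile=client_cert, keyfile=client_key)
    return ctx


ctx = build_tls_context()
print(ctx.verify_mode, ctx.check_hostname, ctx.minimum_version)
```

An MQTT client library would then be handed this context when it connects (paho-mqtt, for instance, accepts one through `tls_set_context`); that wiring is deliberately left out here because it depends on the library and version in use.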

OPC UA goes further, integrating authentication mechanisms via X.509 certificates, digital signatures, and encryption directly into the protocol itself, making it the natural choice for Industry 4.0 environments that require interoperability between heterogeneous machines.

2.2 Data storage

As with everything, there is no single database suitable for all IoT scenarios. The choice depends on a precise trade-off between write latency, time-series query capability, scalability, and compliance requirements. For continuous telemetry—temperatures, pressures, vibrations sampled at regular intervals—the most common time-series databases are InfluxDB and TimescaleDB: optimized compression, fast queries over time ranges, configurable retention policies.
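To make "queries over time ranges" and "retention policies" concrete, here is a small sketch of both operations. It deliberately uses SQLite from the Python standard library as a stand-in, not InfluxDB or TimescaleDB: those products implement the same ideas natively and far more efficiently. The table layout, the machine name `press-01`, and the sample values are all hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE telemetry (ts INTEGER NOT NULL, machine_id TEXT NOT NULL, "
    "signal TEXT NOT NULL, value REAL NOT NULL)"
)
# Composite index ending in the timestamp, so per-signal range queries
# do not have to scan the whole table.
conn.execute("CREATE INDEX idx_telemetry ON telemetry (machine_id, signal, ts)")

# One hypothetical temperature sample per second, timestamps in Unix seconds.
t0 = 1_700_000_000
rows = [(t0 + i, "press-01", "temp_c", 60.0 + i * 0.1) for i in range(600)]
conn.executemany("INSERT INTO telemetry VALUES (?, ?, ?, ?)", rows)


def average_in_range(conn, machine_id, signal, start_ts, end_ts):
    """Average value over the half-open time range [start_ts, end_ts)."""
    cur = conn.execute(
        "SELECT AVG(value) FROM telemetry "
        "WHERE machine_id = ? AND signal = ? AND ts >= ? AND ts < ?",
        (machine_id, signal, start_ts, end_ts),
    )
    return cur.fetchone()[0]


def apply_retention(conn, now_ts, keep_seconds):
    """Delete samples older than the retention window; return rows removed."""
    cur = conn.execute("DELETE FROM telemetry WHERE ts < ?", (now_ts - keep_seconds,))
    conn.commit()
    return cur.rowcount


avg_first_minute = average_in_range(conn, "press-01", "temp_c", t0, t0 + 60)
removed = apply_retention(conn, now_ts=t0 + 600, keep_seconds=300)
remaining = conn.execute("SELECT COUNT(*) FROM telemetry").fetchone()[0]
print(avg_first_minute, removed, remaining)
```

In a dedicated time-series database the retention step is a declared policy rather than a hand-written delete, and the range query benefits from time-partitioned, compressed storage; the logic, however, is the same.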

2.3 Confidentiality and access control

Protecting industrial data means acting on two distinct fronts: during transit and during storage.

In transit, TLS encryption covers most application protocols. Where the risk perimeter is broader, industrial VPNs, for example based on OpenVPN, protect the entire channel between edge and cloud. Once data reaches the database, AES-256 encryption of storage volumes is now considered the acceptable minimum. For particularly sensitive data, such as operator personal data or industrial secrets, column-level encryption allows selective protection of only critical fields, reducing the exposure surface.

On the access control front, the principle of least privilege should be followed, whereby each system, human or machine, accesses only the data strictly necessary for its function.
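In code, least privilege comes down to one rule: deny by default, and allow only what has been explicitly granted. The following sketch shows that rule in isolation. The `Principal` type, the identities, and the resource names are hypothetical; a real deployment would rely on the access control of the broker, the database, or an identity provider rather than on application code like this.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principal:
    """A human or machine identity with an explicit set of grants."""
    name: str
    grants: frozenset = field(default_factory=frozenset)  # (resource, action) pairs


def is_allowed(principal: Principal, resource: str, action: str) -> bool:
    """Deny by default: access requires an explicit (resource, action) grant."""
    return (resource, action) in principal.grants


# Hypothetical identities and resources, for illustration only.
edge_gateway = Principal("edge-gateway-07", frozenset({("telemetry", "write")}))
maintenance_dashboard = Principal(
    "maintenance-dashboard",
    frozenset({("telemetry", "read"), ("alarms", "read")}),
)

print(is_allowed(edge_gateway, "telemetry", "write"))          # True
print(is_allowed(edge_gateway, "telemetry", "read"))           # False
print(is_allowed(maintenance_dashboard, "operators", "read"))  # False
```

The gateway can write telemetry but cannot read it back, and the dashboard never sees operator personal data: each identity holds only what its function requires.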

2.4 Data traceability

In smart manufacturing, traceability is not a secondary regulatory matter: it is an operational requirement. Regulations such as GDPR and NIS2 and standards such as ISO 27001 and IEC 62443 all converge on a common principle: every piece of data must have a verifiable history.
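One common way to make a history verifiable is a hash-chained log, in which every entry includes the hash of the previous one, so that any later modification breaks the chain. The sketch below illustrates the idea with the standard library only. It is one possible technique, not something the regulations above prescribe, and the event fields and function names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """SHA-256 over the previous hash plus a canonical JSON form of the event."""
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev_hash": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute every hash in order; any edit or reordering is detected."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_event(audit_log, {"actor": "edge-gateway-07", "action": "write",
                         "record": "batch-1042/temp_c"})
append_event(audit_log, {"actor": "quality-engineer", "action": "read",
                         "record": "batch-1042/temp_c"})
print(verify(audit_log))
```

An audit then starts by running `verify` over the log; if it passes, the recorded sequence of events can be trusted to be the one that was originally written, provided the latest hash is itself stored somewhere the writer cannot alter.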

The ultimate goal of this infrastructure is not technology for its own sake, but optimization and control. The Smart Factory is that place where the production manager can see in real time not only whether a machine is stopped, but why it is stopped, automatically comparing historical performance with current performance; or where an anomalous temperature spike is immediately correlated with the batch being processed, the tool mounted, the operator on shift; or again, where compliance auditing is not a manual operation taking weeks, but a query on a system that has recorded everything, always, in a verifiable way.